Minimum TTLs

This note explores the use of short TTLs for failover (short TTLs have no role to play in load balancing) and seeks to answer the apparently simple question: what is the lowest sensible TTL? The related, and far more important, question "Is a short TTL the best failover strategy?" is dealt with in another article (hint: multiple RRs are a faster and more reliable strategy).

In answering the TTL question three assumptions are made:

Failover is designed to provide an alternative IP address in the event of a server/service or access route failure. That is, the Authoritative DNS will replace the IP address in the A RR pointing to the service when local equipment detects a failure. The failover method could be called, for want of any better terminology, single-service-IP-with-dynamic-replacement. Catchy.

The service for which failover is required is TCP based, for example, HTTP, HTTPS or FTP and is initiated typically from a web browser. TCP error recovery methods are defined within the protocol whereas UDP error recovery methods are application based and therefore subject to multiple variables.

The majority of clients accessing the services are based on Microsoft's Windows series of OSes (simple fact of life - over 90% are).

There is no doubt that local equipment can efficiently and effectively monitor the health and availability of important services and rapidly replace failing services by switching in a backup/hot standby and changing the IP address in a DNS A RR. However, what such local equipment cannot do is monitor every communication path from every user. If a communication link is out for a temporary period then significant numbers of users may be affected while the local equipment continues to see a perfectly functioning service. Multiple IPs (with geographic separation) will solve this problem.

<Prejudice Warning> The author of this note considers low - sub one minute - TTLs to be inherently evil for 3 reasons:

Load: They place a huge - and unnecessary - load on domain name servers and the DNS infrastructure - specifically caching name servers in service provider locations.

Reliability: The reliability level of the DNS becomes the limiting factor in service availability.

Cache Poisoning: Since each DNS access offers the possibility of cache poisoning it seems reasonable to limit that possibility as much as possible. Certain well-known attacks, such as those documented in this paper, represent a modest risk at TTLs of 3,600+ seconds (1 hour+) but suddenly become serious with a TTL of, say, 5 seconds: there is a 10% possibility of poisoning after 2 minutes and 90% after 40 minutes. Not comforting numbers.

Note: During the course of running some experiments, for this and another article, some bizarre artifacts of a DSL modem and/or DNS proxies were observed. The net result was that invalid DNS results were returned when similar DNS requests were issued within 15 minutes. Another reason to keep TTLs high?</Prejudice Warning>
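The cache poisoning figures quoted in the warning above (10% after 2 minutes and roughly 90% after 40 minutes at a 5 second TTL) are consistent with a very simple model, sketched below. The model is an assumption of this sketch, not taken from the paper referred to: every TTL expiry gives the attacker one independent attempt with the same small chance of success. The function names are illustrative only.

```python
def per_attempt_probability(target: float, elapsed_s: float, ttl_s: float) -> float:
    """Per-refresh success probability implied by a cumulative probability.

    Assumes one independent poisoning attempt per TTL expiry.
    """
    attempts = elapsed_s / ttl_s
    return 1.0 - (1.0 - target) ** (1.0 / attempts)


def cumulative_probability(p: float, elapsed_s: float, ttl_s: float) -> float:
    """Chance that at least one of the attempts made in elapsed_s succeeds."""
    attempts = elapsed_s / ttl_s
    return 1.0 - (1.0 - p) ** attempts


# Derive the per-attempt probability from the quoted "10% after 2 minutes".
p = per_attempt_probability(0.10, elapsed_s=120, ttl_s=5)

# Compare a 5 second TTL with a 1 hour TTL over several observation windows.
for label, elapsed in (("2 minutes", 120), ("40 minutes", 2400), ("24 hours", 86400)):
    short = cumulative_probability(p, elapsed, ttl_s=5)
    if elapsed >= 3600:
        long_text = f"{cumulative_probability(p, elapsed, ttl_s=3600):.1%}"
    else:
        long_text = "first refresh not yet due"
    print(f"{label:>10}: TTL 5 s = {short:.1%}, TTL 3600 s = {long_text}")
```

Under this assumed model the 40 minute figure works out at about 88%, close to the quoted 90%, while a 3,600 second TTL needs a full day to reach the 10% that a 5 second TTL reaches in 2 minutes.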

The Process of a Name Lookup

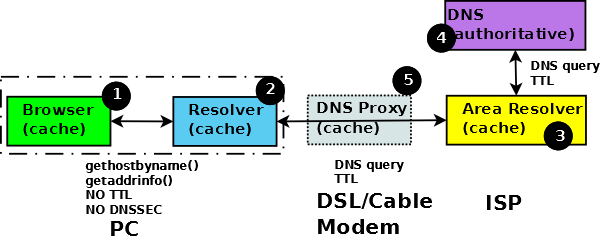

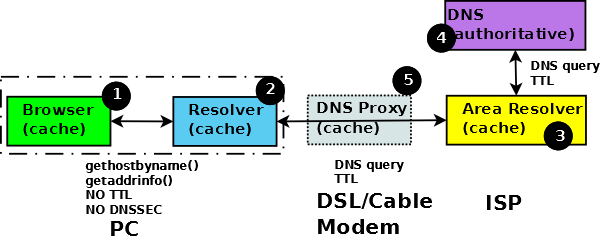

Figure 1 shows why TTLs of less than 30 minutes have absolutely no effect on failover performance for current site users when using MSIE, although shorter TTLs will have an impact on new site visitors. Nominally Firefox users would reread the DNS every 60 seconds, but since it takes approximately 3 minutes for the browser to finally give up and notify the user of a failure, the minimum sensible TTL for Firefox users would be 3 minutes.

To explain this reasoning it is necessary to understand the process by which IP addresses eventually end up in a browser and which is illustrated in Figure 1 below.

Figure 1 Caching effect in browsers

The crux of the problem is caching - not in the DNS servers but in the browser. Figure 1 shows the process used to obtain an IP address by a browser.

The browser requests the IP address using a POSIX standard resolver library function (1) - either gethostbyname() or the newer version getaddrinfo() or in the MSIE case a non-POSIX, but functionally equivalent, call is used.

The local resolver (2) initiates a standard DNS query to its locally defined DNS (3) - typically located at a service provider - which, being a recursive DNS, will initiate one or more DNS queries to the DNS infrastructure, finally resulting in a query to the domain owner's authoritative name servers (4).

The responses to DNS queries contain the full DNS RR(s) including the TTL. Thus, the response paths 4->3 and 3->2 will contain the TTL data, say, 5 seconds. The library function (path 2->1) response is not specified to contain TTL data (Microsoft's non-POSIX functions, being modelled on the POSIX versions, similarly do not return TTL values). The TTL information at this point is effectively lost.
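The loss of the TTL on path 2->1 is easy to see from any language that wraps the resolver library. The sketch below uses Python's socket.getaddrinfo(), which is a thin wrapper over the system call of the same name; the resolve() helper is a name invented for this illustration. Each result is a 5-tuple of (family, type, proto, canonname, sockaddr) and none of the fields carries a TTL.

```python
import socket


def resolve(host: str, port: int = 80) -> list[str]:
    """Return the addresses the resolver library hands back for host."""
    results = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    addresses = []
    for family, socktype, proto, canonname, sockaddr in results:
        # sockaddr is (address, port) for IPv4 and
        # (address, port, flowinfo, scope_id) for IPv6.
        # Nothing here says how long the answer remains valid.
        addresses.append(sockaddr[0])
    return addresses


# A numeric address is used so the sketch runs without network access;
# substitute any host name to see the same five fields and still no TTL.
print(resolve("127.0.0.1"))
```

An application that wants the TTL has to bypass this interface and issue its own DNS query, which is exactly the step browsers avoid by using a fixed cache timeout.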

The browser - in order to speed up performance - maintains a cache of results which, in the absence of any other information, is timed out after 30 minutes (MSIE < 10?) or after 1 minute in the case of the Mozilla family and MSIE (10+); the value is configurable in both families. Thus, the IP address will not be replaced, irrespective of the TTL value, for 1 to 30 minutes for existing site visitors. Finally, we need to consider the time the browser and TCP/IP stack between them take to decide when a site is unreachable. In the case of MSIE this is around 2 minutes and in the case of Firefox around 3 minutes (the difference is entirely to do with retry strategies adopted by the respective browsers).
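The effect of a fixed browser cache timeout can be shown with a toy model. This is only a sketch of the behaviour described above, not the code of any real browser: BrowserDnsCache, lookup() and the example addresses (taken from the documentation range 192.0.2.0/24) are all invented for the illustration, and time is passed in explicitly so the example runs instantly.

```python
class BrowserDnsCache:
    """Toy browser host cache: entries expire after a fixed lifetime.

    The DNS TTL plays no part because the resolver library never
    passes it to the browser.
    """

    def __init__(self, lifetime_s, lookup):
        self.lifetime_s = lifetime_s
        self.lookup = lookup    # callable host -> ip, stands in for getaddrinfo()
        self.entries = {}       # host -> (ip, time stored)

    def get(self, host, now):
        cached = self.entries.get(host)
        if cached is not None and now - cached[1] < self.lifetime_s:
            return cached[0]    # still fresh as far as the browser knows
        ip = self.lookup(host)
        self.entries[host] = (ip, now)
        return ip


# The "authoritative" record, changed by the failover equipment.
record = {"www.example.com": "192.0.2.1"}


def lookup(host):
    return record[host]


older_msie = BrowserDnsCache(1800, lookup)   # 30 minute timeout
mozilla = BrowserDnsCache(60, lookup)        # 1 minute timeout

# Both browsers visit the site at t = 0.
older_msie.get("www.example.com", now=0)
mozilla.get("www.example.com", now=0)

# Failover occurs at t = 10 seconds: the A RR now points to the standby.
record["www.example.com"] = "192.0.2.2"

# Ten minutes into the visit:
print(older_msie.get("www.example.com", now=600))   # 192.0.2.1 (failed address)
print(mozilla.get("www.example.com", now=600))      # 192.0.2.2 (standby)
```

Whatever TTL the zone file carries, the 30 minute browser keeps using the failed address until its own timer expires.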

The problem is entirely one of mismatched objectives. The browsers wish to optimise performance by minimising external accesses, whereas the resolver library system calls (whether POSIX or MS) wish to minimise complexity by reducing the amount of information returned. However, by not returning the TTL from the DNS records the system calls eliminate the only basis on which a sensible DNS cache strategy can be based. Instead we have a kluge.

Notes:

Update: The original tests were done in 2006 with MSIE 6. MSIE 7, 8 and 9 use the same settings and strategy for DNS cache timeout. For MSIE 10, 11 and Edge the DNS cache timeout default is apparently (unverified) 1 minute but can be modified using a registry variable.

The increasingly common DSL/Cable modem (5) almost always contains a DNS Proxy whose functionality varies from the relatively benign (transparent) to the downright intrusive (preventing queries for a period - in some cases 15+ minutes - by serving from a cache irrespective of the actual TTL value) in order to reduce load on the ISP's infrastructure.

Clearly new visitors whose browser has no cache entry will obtain a new IP address using the same process defined above and therefore the short TTL will have the desired effect of providing the up-to-the-second IP address.

It has been pointed out that in Intranet configurations the Proxy/DSL modem is typically not present. Other than having, perhaps, more predictable load characteristics and avoiding unpleasant Proxy quirks, it's not clear that this will have any other impact on the TTL performance.

So, the answer to the question "what is the most sensible minimum TTL?" depends on whether you think it more likely that the service will break during a visit - in which case 180 seconds (3 minutes - if all visitors are Firefox or current MS browsers) or 1800 seconds (30 minutes - if all visitors are older MSIE) seem reasonable values - or on the initial visit - in which case set the TTL to some very low value and spend serious bucks on DNS hardware.

Alternatively you could consider other failover strategies - such as multiple RRs, which are generally faster, more tolerant of network failures and place much less load on the DNS infrastructure.
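The during-a-visit values of 180 and 1800 seconds can be reproduced with a small calculation, sketched below on one reading of the reasoning in this note: a browser cannot react to a changed A RR faster than the larger of its cache timeout and the time it takes to give up on a dead address, and the visitor population is governed by its slowest browser. The profile names and the helper are invented for the sketch, and the 2 minute give-up time for MSIE 10+ is an assumption carried over from the older MSIE measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BrowserProfile:
    name: str
    cache_lifetime_s: int   # browser DNS cache timeout
    give_up_s: int          # time before the browser reports a failure

    def reaction_floor_s(self) -> int:
        """Shortest time in which this browser can act on a new A RR."""
        return max(self.cache_lifetime_s, self.give_up_s)


PROFILES = {
    "msie_old": BrowserProfile("MSIE before 10", 1800, 120),
    "firefox": BrowserProfile("Firefox", 60, 180),
    # Cache default unverified; give-up time assumed equal to older MSIE.
    "msie_new": BrowserProfile("MSIE 10+ / Edge", 60, 120),
}


def minimum_sensible_ttl(browsers) -> int:
    """TTL below which no browser in the population reacts any faster."""
    return max(PROFILES[b].reaction_floor_s() for b in browsers)


print(minimum_sensible_ttl(["firefox", "msie_new"]))   # 180
print(minimum_sensible_ttl(["msie_old"]))              # 1800
```

A TTL shorter than the value returned buys nothing for visitors already on the site; it only helps new visitors, at the cost of extra DNS load.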
